Automated FMU Generation from UML Models

Introduction

The simulation of cyber-physical systems plays an increasingly important role in the development process of such systems. It enables engineers to get a better understanding of the system in the early phases of development. These complex systems are composed of different subsystems, and each subsystem model is designed with its own specialized tool set. Because of this heterogeneity, the coordination and integration of these subsystem models becomes a challenge.

The Functional Mockup Interface (FMI) specification was developed by an industry consortium as a tool-independent interface standard for the integration and simulation of dynamic system models. Models that conform to this standard are called Functional Mockup Units (FMUs).

In this work we provide a method for automated FMU generation from UML models, making it possible to use model driven engineering techniques in the design and simulation of complex cyber-physical systems.

Functional Mockup Interface

The Functional Mockup Interface (FMI) specification is a standardized interface used in computer simulations for the creation of complex cyber-physical systems. The idea behind it is that if a real product is composed of many interconnected parts, it should be possible to create a virtual product that is itself assembled by combining a set of models. For example, a car can be seen as a combination of many different subsystems, such as the engine, gearbox or thermal system. These subsystems can be modeled as Functional Mockup Units (FMUs) which conform to the FMI standard.

A Functional Mockup Unit (FMU) represents a (runnable) model of a (sub)system and can be seen as the implementation of a Functional Mockup Interface (FMI). It is distributed as one ZIP file archive, with a ".fmu" file extension, containing:

- FMI model description file in XML format. It contains static information about the FMU instance. Most importantly the FMU variables and their attributes such as name, unit, default initial value etc. are stored in this file. A simulation tool importing the FMU will parse the model description file and initialize its environment accordingly.

- FMI application programming interface provided as a set of standardized C functions. C is used because of its portability and because it can be utilized in all embedded control systems. The C API can be provided either in source code and/or in binary form for one or several target machines, for example Windows dynamic link libraries (".dll") or Linux shared libraries (".so").

- Additional FMU data (tables, maps) in FMU specific file formats

The inclusion of the FMI model description file and the FMI API is mandatory according to the FMI standard.
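To make the role of the model description file more concrete, the following is a minimal sketch of how one integer variable entry could be written out in C. The element and attribute names (ScalarVariable, valueReference, Integer, start) follow the FMI 1.0 model description layout as we understand it; the text above does not state which FMI version was used, so treat that as an assumption. The IntVariable struct and the write_scalar_variable function are hypothetical names introduced only for this illustration.

```c
#include <stdio.h>

/* Hypothetical description of one integer FMU variable. */
typedef struct {
    const char *name;          /* variable name as shown to the simulation tool */
    unsigned value_reference;  /* handle used to address the variable */
    int start;                 /* default initial value */
} IntVariable;

/*
 * Writes one variable entry into buf, using FMI 1.0-style element
 * names (an assumption, see the text above). Returns the number of
 * characters that the full entry needs, as snprintf does.
 * Note: the name is not XML-escaped in this sketch.
 */
int write_scalar_variable(char *buf, size_t size, const IntVariable *v)
{
    return snprintf(buf, size,
                    "<ScalarVariable name=\"%s\" valueReference=\"%u\">"
                    "<Integer start=\"%d\"/>"
                    "</ScalarVariable>",
                    v->name, v->value_reference, v->start);
}
```

A caller would fill an IntVariable, for example with the name "counter", value reference 0 and start value 0, and pass it together with a sufficiently large buffer. A complete model description needs further elements around such entries, which this sketch leaves out.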

Tools

Enterprise Architect is a visual modeling and design tool supporting various industry standards including UML. It is extensible via plugins written in C# or Visual Basic. The UML models from which we generate our FMU are defined with Enterprise Architect.

Embedded Engineer is a plugin for Enterprise Architect that features automated C/C++ code generation from UML models.

We further used the FMU SDK from QTronic for creating the FMI API. It also comes with a simple solver which we used to test our solution.

Running Example

Our basic example to test our solution is called Inc. It is a simple FMU with an internal counter that is initialized at the beginning. Each time the FMU is triggered, it increments this counter by a specified step value, until a final value, called to, is reached or exceeded.

State Machine

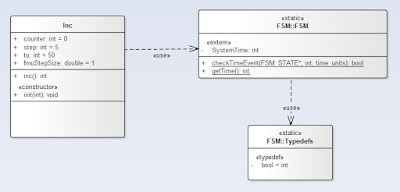

The state machine contains just an initial pseudo state, which initializes the state machine, and a state called Step. The Step state has two transitions. The first is a transition to itself, taken while the counter is still lower than the to value: the inc() operation is called and the machine is in the Step state again. If the counter is equal to or greater than the to value, the machine reaches the final state and no further processing is done.

Class diagram

The class diagram consists of two parts. The left part with the Inc class is project specific. It holds three attributes: counter, step and to. All attributes are of type int. The initial value of counter is 0, of step 5 and of to 50. The FSM classes on the right are the classes that Embedded Engineer requires in order to generate the state machine code.

Some specific implementation code also exists in various places. The state machine has guards on its transitions. These guards are code fragments that are used when generating the state machine code:

me->counter < me->to

and

me->counter >= me->to

The property me represents a pointer to an instance of the Inc class.

And finally the implementation of the inc() operation is also provided:

me->counter = me->counter + me->step;

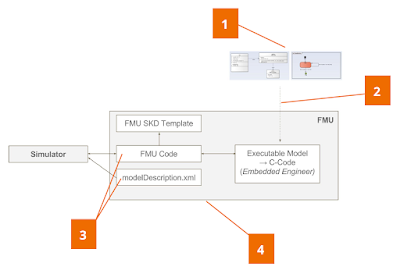
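The behavior described in this section can be put together in a small hand-written C sketch. This is not the code that Embedded Engineer generates; the type and function names (IncState, Inc_init, Inc_trigger, Inc_run) are hypothetical and chosen only for illustration. The guards and the body of inc() are taken from the snippets above.

```c
/* States of the Inc state machine (hypothetical names). */
typedef enum { INC_STATE_STEP, INC_STATE_FINAL } IncState;

/* The Inc class with its three int attributes plus the current state. */
typedef struct {
    int counter;
    int step;
    int to;
    IncState state;
} Inc;

/* Initial pseudo state: set the default values and enter Step. */
void Inc_init(Inc *me)
{
    me->counter = 0;
    me->step = 5;
    me->to = 50;
    me->state = INC_STATE_STEP;
}

/* The inc() operation from the class diagram. */
static void Inc_inc(Inc *me)
{
    me->counter = me->counter + me->step;
}

/* One trigger: evaluate the guards of the Step state. */
void Inc_trigger(Inc *me)
{
    if (me->state != INC_STATE_STEP) {
        return;                        /* final state: nothing left to do */
    }
    if (me->counter < me->to) {        /* guard of the self transition */
        Inc_inc(me);
    } else {                           /* guard: me->counter >= me->to */
        me->state = INC_STATE_FINAL;
    }
}

/* Trigger until the final state is reached; assumes step > 0.
 * Returns the number of triggers that were needed. */
int Inc_run(Inc *me)
{
    int triggers = 0;
    while (me->state != INC_STATE_FINAL) {
        Inc_trigger(me);
        triggers++;
    }
    return triggers;
}
```

With the default values from the class diagram (counter 0, step 5, to 50), ten triggers raise the counter to 50 and the eleventh takes the transition to the final state.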

Manual Code Generation

First we manually created our reference Inc FMU. The following steps were taken:

- UML models were defined in Enterprise Architect (class diagram and state machine diagram)

- C code was generated from the previously created models (with the Embedded Engineer plugin)

- The FMI model description XML file and the FMI API were created by hand

- The (compiled) FMI API was packaged together with the model description file into an FMU file. This was done with a batch script.

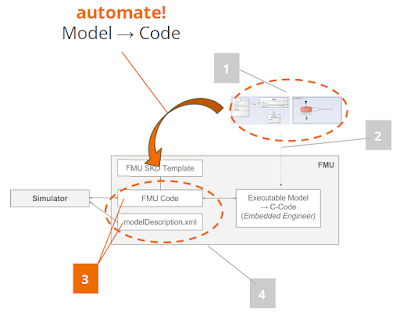

Automatic Code Generation

Now we want to automate the creation of the FMI model description file and the FMI API. For this purpose we wrote our own Enterprise Architect plugin. To be able to generate semantically correct FMI model description and FMI API artifacts, we attached additional information to the UML models. This was achieved through the definition of various UML stereotypes for UML class attributes and methods. Since FMI defines its own data types, we also had to map the data types used in the UML models to the corresponding FMI data types. With these challenges addressed, we were able to implement our FMU generation plugin.

Future Work

Our work is a basic prototype that could be improved in various ways. The following list describes some issues that could be tackled.

- One limitation of the current implementation is that we are not able to provide initial values for the FMU. Consequently, to test different configurations of our FMU, we always have to set the default values in the UML models and regenerate the FMU for the simulator. Hence, future work includes creating new stereotypes for the initialization of FMU settings/variables and testing these bindings.

- We used the FMU SDK simulator for this project. Other (more powerful) simulators should be tested too. Furthermore, co-simulation with other FMUs needs to be tested.

- In our project we restricted ourselves to just look at discrete functions by using the event system of the FMU. To continue the journey we also have to consider continuous functions.

- More complex examples should be considered to test the capabilities of the automatically generated code. By creating more complex examples, the practical limitations of modeling an FMU with a class diagram and a finite state machine can be discovered. Questions like "What can be implemented?" and "What cannot be implemented?" need to be answered.

- The automated code generation process could be reduced to a one-click functionality to directly generate the ".fmu" file without any additional compilation and packaging step necessary.

Threatscapes in Focus: How NIEM supports Law Enforcement

Security - A Shared Concern

In July 2016, Sparx Systems attended the Australian National Security Summit as a key sponsor. What stood out at this conference was the speakers' chorus of concern for collaboration and integration. In every presentation the shared concern was the ability of different people, in the same or geographically dispersed locations, to share information: joining the dots to identify patterns or connections in what appear to be isolated or unrelated events that could present a threat to security.

In his presentation, 'Asia Pacific to 2025 - Challenges and Opportunities' Peter White from the Australian Department of Infrastructure and Regional Development, emphasised that to maintain aviation security in our region there was a “need to work collectively and cooperatively with regional partners”. Detective Inspector Glyn Lewis, National Coordinator Cyber Crime Operations, from the Australian Federal Police spoke of “collaborating with our international law enforcement partners” in his presentation 'Tackling the challenge of Cyber Crime in an ever changing landscape'.

In the same month, at a meeting of the North Atlantic Council in Warsaw, participating Heads of State and Government committed to enhance resilience, in facing “a broader and evolving range of military and non-military security challenges.” The statement went on to say, that being resilient against these challenges requires Allies “to work across the whole of government and with the private sector.” Resilience also requires that the Alliance continues “to engage, as appropriate with international bodies particularly the European Union, and with partners.”

Sharing Information

In the international security network today, the message to increase collaboration and integration for information sharing is consistent. The Information Sharing Environment (ISE) was established by the United States Intelligence Reform and Terrorism Prevention Act of 2004 and provides analysts, operators, and investigators with the information needed, to enhance national security.

In 2001, a handful of organizations, collectively known as the Global Justice Information Sharing Initiative, started to create a seamless, interoperable model for data exchange to overcome the challenges of exchanging information, across state and city government boundaries. The first pre-release of the Global Justice XML Data Model (GJXDM), a foundational predecessor and building block of NIEM, was announced in April 2003. Parallel to the GJXDM effort, the U.S. Department of Homeland Security (DHS) began working on standards for metadata and these efforts by the justice and homeland security communities, led to the beginnings of the National Information Exchange Model (NIEM).

National Information Exchange Model

NIEM was formally initiated in April 2005 by the Department of Homeland Security and the Department of Justice, uniting key stakeholders from federal, state, local, and tribal governments to develop and deploy a national model for information sharing and the organizational structure to govern it. A standards-based approach to exchanging information, NIEM enables communication between systems, even if they have never communicated before, while ensuring that information is well understood and carries the same consistent meaning across various communities, supporting interoperability.

NIEM standardizes the semantics describing the data, so that the underlying information is exchanged between jurisdictions seamlessly, accurately and – when fully implemented – without delay.

For example, databases in one American state may refer to a person in jail as a prisoner, while another refers to someone in identical circumstances as an inmate. If the federal government wants to query databases in both states to ask if a particular person is in jail, all three need to agree on identical, standardized language. NIEM enables individual agencies and sectoral domains to map their language, to the terminology set out in the NIEM standards.
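The idea of mapping local vocabulary to one agreed term can be sketched as a simple lookup table. This is only an illustration of the principle, not how NIEM itself is implemented: the shared term "PersonInCustody" is a made-up placeholder and not an actual NIEM element name, and TermMapping and to_shared_term are hypothetical names.

```c
#include <stddef.h>
#include <string.h>

/* One entry: an agency's local term and the agreed shared term. */
typedef struct {
    const char *local_term;
    const char *shared_term;
} TermMapping;

/* "PersonInCustody" is a placeholder, not a real NIEM element name. */
static const TermMapping TERM_MAP[] = {
    { "prisoner", "PersonInCustody" },
    { "inmate",   "PersonInCustody" },
};

/* Returns the shared term for a local term, or NULL if none is mapped. */
const char *to_shared_term(const char *local_term)
{
    size_t count = sizeof TERM_MAP / sizeof TERM_MAP[0];
    for (size_t i = 0; i < count; i++) {
        if (strcmp(TERM_MAP[i].local_term, local_term) == 0) {
            return TERM_MAP[i].shared_term;
        }
    }
    return NULL;
}
```

Both "prisoner" and "inmate" resolve to the same shared term, so a query phrased in the shared vocabulary can be answered by either state without either one renaming its own records.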

Joining the Dots for a Clearer Picture

In the poem by John Godfrey Saxe, six visually impaired men provide their individual descriptions of an elephant by touching the animal. In their conclusions “each was partly in the right And all were in the wrong.” The message of the story is that, when not coordinated, investigations of a system's components and the relationships between them prevent a shared understanding of the overall picture, which can lead to serious misinterpretations based on a lack of information.

The brilliance of the standards creation effort is, that while standards codify best practices, for the benefit of all stakeholders, they also reduce or eliminate those differences between individual stakeholders, that would hold them in silos and place them collectively at a competitive disadvantage. In the context of collaboration and integration, security standards eliminate the weak linkages in the chain.

The ISE SAR Functional Standard exemplifies this value proposition. It supports improved information sharing and safeguarding capability, enabling community members to better plan and execute initiatives.

Suspicious Activity Reporting (SAR) is a common police procedure for recording observations from patrol shifts. The different personnel capturing the information, and the varied locations, formats and definitions, have raised the question of how best to use the data. How will different people in the same or different locations join the dots, to identify patterns or connections in what appear to be isolated or unrelated events?

In the past, Local, State and Federal systems were not designed to interoperate. In fact, in some states, it was illegal to share information with the federal government. Incompatible computer systems compounded the siloed nature of information. Most people are familiar with the stories of emergency vehicles turning up at the wrong address due to incompatibility between the databases of different local authorities.

Communities of Interest

The Nationwide SAR Initiative (NSI) is a partnership among state, local, tribal, and federal agencies, including the Bureau of Justice Assistance, Office of Justice Programs, U.S. Department of Justice (DOJ); the Program Manager for the Information Sharing Environment; the U.S. Department of Homeland Security; the Federal Bureau of Investigation’s eGuardian; the Global Justice Information Sharing Initiative; and the U.S. Department of Defense.

The Major Cities Chiefs Association (MCCA), the Major County Sheriffs’ Association, the National Sheriffs’ Association, and the International Association of Chiefs of Police (IACP) all unanimously support the SAR (Suspicious Activity Report). This is what is called a NIEM Community of Interest (CoI). These NIEM domains each have an executive steward, to officially manage and govern a portion of the NIEM data model.

With the development of XML, departments were able to exchange information legally using a metadata dictionary while maintaining their own legacy systems' naming conventions. For instance, agreeing to use the term “car” instead of “automobile” or “vehicle” allowed different entities to share information without changing their own departmental language.

Over the last decade, the National Information Exchange Model (NIEM) has become a significant new resource for information sharing. In 2007 the various stakeholders in different departments got together to discuss how to standardise information sharing and, in due course, defined the elements of the SAR Information Exchange Package Documentation (IEPD). 16,000 data elements from various sources were collected, analyzed and reduced to around 2,000 unique data elements, which were incorporated into about 300 reusable components, resulting in the Global Justice XML Data Dictionary (Global JXDD). The Global JXDD components were accessible from multiple sources and resulted in increased interoperability throughout different justice and public safety information systems.

Using the JXDD would define the terms that would be used to compose a SAR, wherever it was used. In 2008, the office of the Program Manager for the Information Sharing Environment issued the ISE SAR Functional Standard codifying the SAR IEPD.

Painting the Picture

The IEPD is a data dictionary that allows agencies to validate data exchanged in reports or queries. It provides a clearly defined path for the development of an exchange model and a reusable basis for any new system to join the same exchange. When speaking about how the SAR IEPD enabled agencies to connect unrelated events, Los Angeles Police Department Commander Joan T. McNamara commented, “This paints an amazing picture in real time.”

Non-experts can develop NIEM-conformant messages if required, and watch officers, analysts, and scientists can read and interpret those messages, even if they are sent machine-to-machine.

Sparx Systems Support for NIEM

The NIEM-specific UML profile, which enables data exchanges to be modeled in tools like Sparx Systems Enterprise Architect, was released by the OMG in 2013. A recorded webinar (March 2016) examining the benefits of using Enterprise Architect to model and define information sharing with the NIEM standard is available among the YouTube videos from Sparx Systems.

Sparx Systems Enterprise Architect supports the representation of the NIEM as a Unified Modeling Language (UML) profile, providing the ability for users to automatically produce NIEM-conformant XML schema. The MDG Technology for NIEM facilitates the creation and development of IEPD models, by providing starter models, model patterns and a number of toolboxes, for creating IEPD models and schema models.

All of the NIEM specifications and naming rules are written into the NIEM-UML profile now built into Enterprise Architect. The challenge of building a NIEM exchange for an organization is now simplified and automated, enabling architects and developers to finish sooner with a smaller budget and better quality assurance.

Complete IEPDs can be generated from IEPD models, and NIEM-conformant schemas can be generated from information models. NIEM Reference Schemas can be imported into the model, and NIEM subset namespaces, composed from elements of the NIEM Reference Schemas, can be created along with PIM, PSM and Model Package Description (MPD) diagrams, using the NIEM Toolbox pages. By using the Schema Composer, subsets of the NIEM-core reference schema can be easily created, avoiding time-consuming and error-prone manual work.

Sparx Systems Enterprise Architect is featured on the NIEM Tools Catalog.

Register Now - Webinar Enterprise Architect 12 Release Highlights

Enterprise Architect 12 is a major milestone release of Sparx Systems' award-winning modeling platform. Significant user interface enhancements make it easier to navigate the model, access element properties and create a personalized look and feel. Hundreds of updates and new tools make your modeling more productive than ever!

Scott Hebbard and Ben Constable review some release highlights:

- New tools for wireframing, database engineering and XML schema development

- Enhanced Testing, Project Management and superior Model Merge

- Major UI updates and faster navigation with Portals

To suit users in different time zones, we will hold two sessions – each 60 minutes in duration.

http://www.sparxsystems.com/resources/webinar/release/ea12/enterprise-architect-12-overview.html