Author: Nya A Murray, Chief Architect, Trac-Car

Date: 23rd January 2010

Website: http://www.trac-car.com

1 Executive Summary

Corporations and governments around the world are starting to collect information from telemetry devices in the fields of:

1. Water supply

2. Transport

3. Electricity

4. Manufactured goods

5. Agriculture

Valuable information can be produced from telemetry data, about resource utilization and associated carbon emissions in all of these fields.

Responding to climate change is imperative. Telemetry data is a critical input for accurate global environmental information, and for measuring the carbon emissions associated with individual developments.

1.1 Emissions Trading

Emissions Trading Schemes, as set out in the Kyoto Protocol, rely on the market mechanism of cap and trade to regulate carbon emissions. Different mechanisms are being used in different parts of the world, probably the most developed being the European Union ETS. In addition, the Kyoto Protocol allows for two types of project-based initiatives that developed countries can undertake in other countries: the Clean Development Mechanism (CDM, projects in developing countries approved under the UNFCCC) and Joint Implementation (JI) projects. One Emissions Trading Unit (ETU) is equivalent to a 1 tonne reduction of CO2 emissions.

There are a few emerging problems with cap and trade schemes, such as

- The estimation techniques carry significant uncertainty.

- Many emitters and industries are not covered by the schemes.

- Carbon leakage: a net increase in carbon emissions caused by differences in pricing and regulation between countries, of which carbon polluters take advantage.

There are no guarantees that these schemes will be effective in the actual measurement and reduction of carbon emissions into earth’s atmosphere, because practically speaking, it is impossible to fully regulate a carbon market within the timescales required to be sure of limiting temperature rises to an acceptable range.

With the lesson of the failure of self-regulation by financial markets still fresh, it may well prove too risky to allow market forces to regulate carbon emission reduction.

A better strategy for carbon emissions governance is to selectively apply a regime of carbon emissions monitoring, particularly in the energy and transport industries, encouraging engagement and participation by all sectors of the corporate community. This may serve to actively promote a popular culture of carbon emissions reduction, by offering carbon emission reduction discounts.

1.2 Real-time monitoring and carbon discounts

By monitoring events that emit CO2, such as power consumption and transport, at both industry and household level, pricing incentives for carbon reduction may build the popular support needed to limit temperature rises effectively.

Carbon taxes, discounts, and cap and trade schemes will all work more effectively if the end users are involved in monitoring mechanisms. Some of the obvious methods are:

- Monitoring energy utilization in real time and rewarding use of renewable energy with discounts.

- Road pricing based on distance driven, with discounts for greener cars, particularly electric vehicles (where emissions would be accounted for by energy supply utilization).

- Estimation and calculation of the carbon emissions used to produce goods traded or sold, and to construct new buildings and industrial developments.

This information could be published, and used as the basis for estimating carbon taxes.
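As an illustration of the road pricing method listed above, the following is a minimal sketch only. The rate, the discount table, and the names `Trip`, `BASE_RATE_PER_KM`, `GREEN_DISCOUNT` and `road_charge` are assumptions made for this example; they are not part of any existing scheme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trip:
    distance_km: float   # distance reported by the vehicle's telemetry device
    vehicle_class: str   # hypothetical category label, e.g. "petrol" or "electric"


# Illustrative values only; real tariffs would be set by the pricing authority.
BASE_RATE_PER_KM = 0.10
GREEN_DISCOUNT = {"petrol": 0.0, "hybrid": 0.25, "electric": 0.50}


def road_charge(trip: Trip) -> float:
    """Return the distance-based charge after the vehicle-class discount."""
    if trip.distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    # Unknown vehicle classes receive no discount.
    discount = GREEN_DISCOUNT.get(trip.vehicle_class, 0.0)
    return round(trip.distance_km * BASE_RATE_PER_KM * (1.0 - discount), 2)
```

Keeping the discount table separate from the calculation means a tariff change is a data change, which fits the published, auditable pricing the text describes.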

The success of monitoring global carbon emissions reduction depends on a mechanism for ensuring that knowledge, experience and expertise in carbon emissions monitoring is shared, not only locally, but nationally and internationally.

1.3 The Role of Logical Models

To achieve this, it is important that a standard approach is developed to the collection of carbon monitoring data.

A modeled, knowledge-managed approach to the integration of real-time data on which carbon emissions can be calculated or estimated is key. Data could be collected from distributed sources, supplied by collaborative stakeholders based in multiple locations.

A common information model for key industries, such as Energy and Transport, is essential to ensuring that data can be aggregated from communities, regions, states, nations, and internationally.
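What a record in such a common information model might look like can be sketched as follows. The class name `EmissionsEvent` and every field in it are assumptions for illustration; the paper does not prescribe a schema.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class EmissionsEvent:
    """One telemetry reading in a hypothetical common format."""
    source_id: str       # telemetry device or meter identifier
    sector: str          # e.g. "energy" or "transport"
    region: str          # locality used for regional aggregation
    country: str         # used for national and international aggregation
    timestamp: datetime  # time of the reading, must be timezone-aware
    quantity: float      # measured amount
    unit: str            # unit of the measured amount, e.g. "kWh" or "km"
    co2_kg: float        # calculated or estimated emissions for this reading

    def __post_init__(self):
        # Readings from many countries can only be merged on a common clock.
        if self.timestamp.tzinfo is None:
            raise ValueError("timestamp must be timezone-aware")
        if self.co2_kg < 0:
            raise ValueError("co2_kg must be non-negative")
```

Carrying region and country on every record is one way to let the same data be rolled up at community, national and international level without further lookups.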

Information analysis of telemetry and other metric data could take advantage of low cost information technology advances in dynamic software, hardware and network infrastructure.

And these carbon emission data collections could be shared on an industry, company and even household basis, via web portals.

1.4 Integrate with existing technology

A particularly effective place to start would be power utilization, as this is where consumers could be given a choice of power source. Suppliers could offer discounts for use of renewable energy, creating incentives for moving away from fossil fuel power generation. Discounts and consumption levels could be viewed by customers over web portals.

This would provide economic incentives for encouraging the production of renewable energy grids, such as the current initiative for a clean energy super grid by Europe's North Sea countries (able to store power, effectively forming a giant renewable power station).

Telemetry devices are monitoring electricity consumption, transport mileage and water resource utilization, to name a few. The quantity of information to be collected will expand exponentially over the next few years.

A knowledge-managed approach, based around standard industry models, can be implemented on a centralized basis. Improvements in carbon emission methodologies could be communicated across the usual national and corporate boundaries, made available by federated web-content management.

1.5 Carbon Emissions Monitoring Model

As well as agreements such as Kyoto, information technology has to play a vital role to ensure that information is easily accessible and able to be aggregated across diverse industries and infrastructure.

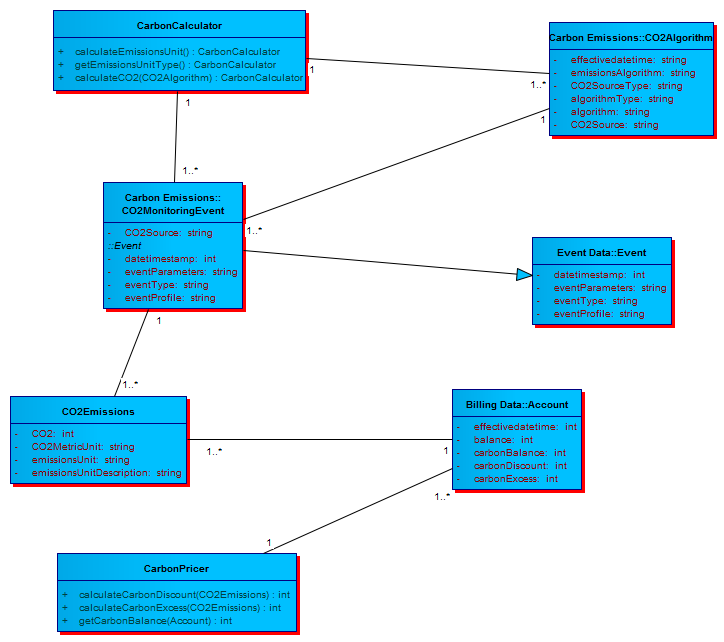

A core logical model that is deployable in a data integration technology, and able to map current technology deployment patterns to information analysis of carbon emissions, is a key component of international industry collaboration for publishing and sharing information about carbon emissions.

As knowledge and experience on carbon emissions monitoring increase, optimal access to aggregated data in near real time will become possible, enabling immediate access to resource utilization and associated carbon emissions information, on demand.

It is essential to employ a common information technology model to map with current industry models, to collect data in real time. Without a core logical model, federating web content from different suppliers, governments, and consumers becomes too expensive and too difficult.

It cannot be said often enough that a Carbon Emissions Monitoring Model is a key participation component for effective short-term deployment of systems to monitor, calculate and price carbon emissions.

1.6 Model-Based Deployment

Time is running out on climate change action. It is essential, in the short term, to begin providing accurate metrics and statistics on carbon emissions, to enable assessment of targets, which must be lowered over time to meet accelerating risk.

Device technology is advancing rapidly. Telemetry devices can be remotely configured, and new information captured and processed, as it becomes available and useful. It is very important to make information access easy.

Data can be sent over any network, e.g. electricity supply, WiFi, UMTS, internet, corporate WAN/LAN, and aggregated centrally to provide dashboard-style reporting. These technology capabilities can be met by leading technology suppliers today.

Technology suppliers have to be capable of distributed, change-enabled, monitored, dynamic technology services, based on core logical models that can map to standard industry models.

Real-time cumulative costs, carbon emissions and resource accounting information could be displayed to registered users through a portal.

Data that is mapped to the Carbon Emissions Monitoring Model could be aggregated across regions, industries and other groupings to provide, for example, daily carbon emissions from power and transport.
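The aggregation described above can be sketched as a grouping over mapped records. This is a minimal illustration: the function name `aggregate_co2`, the field names, and the sample readings are invented for the example and are not real measurements.

```python
from collections import defaultdict


def aggregate_co2(events, group_by):
    """Sum co2_kg over events, grouped by the given field names."""
    totals = defaultdict(float)
    for event in events:
        key = tuple(event[field] for field in group_by)
        totals[key] += event["co2_kg"]
    return dict(totals)


# Made-up sample readings, already mapped to a common record shape.
events = [
    {"day": "2010-01-23", "region": "north", "sector": "power", "co2_kg": 12.5},
    {"day": "2010-01-23", "region": "north", "sector": "transport", "co2_kg": 3.0},
    {"day": "2010-01-23", "region": "south", "sector": "power", "co2_kg": 7.5},
]

by_day_sector = aggregate_co2(events, ("day", "sector"))
by_day_region = aggregate_co2(events, ("day", "region"))
```

Because the grouping fields are passed in as a parameter, the same records can be rolled up by region, by industry or by any other grouping without changing the collection side.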

This addresses the real challenge for carbon emissions trading schemes, "carbon leakage" (the increase in CO2 emissions in some countries because of decreases in others).

Accurate power and transport real-time monitoring data can be aggregated, and extrapolated to provide carbon emissions statistics.

A Carbon Emissions Monitoring Model would be a starting point to ensure that telemetry information, which can provide accurate emissions data, can be linked with other carbon metrics readily and rapidly.

1.7 International Information Exchange

It is timely to envisage international exchanges of information for carbon trading, energy pricing, and inputs into climate change models, made securely and readily available to all user types, corporate, government and individual consumers.

A short-sighted approach to the technology applied to emissions trading schemes will be a huge inhibitor to accurate carbon emissions reduction monitoring. Near enough is not good enough. The risks of inaccurate monitoring associated with inaccurate estimation methodologies are too high, in the context of the uncertainties of temperature rises and climate change that accompany increasing levels of greenhouse gases in the atmosphere.

Global collaboration must be built into the monitoring mechanisms from inception.

Carbon monitoring initiatives should be designed to utilize a best-of-breed technology approach, with the emphasis on carbon emissions measurement to replace estimation methodologies.

It is clear that the current emissions trading schemes cannot be relied upon to accurately estimate or forecast temperature rises that produce climate change and extreme weather events associated with rising greenhouse gas emission levels.

1.8 Utilization of Existing Technology

There are a number of technology options for gathering information from telemetry data collected in real-time from telemetry devices, to be aggregated with estimated carbon emissions to provide a fuller picture of carbon emissions reduction.

Whatever technology is deployed in collecting data, it is the data integration that is critical to the success of providing emission information.

For data integration to be cost-effective and able to use any technology, the most important factor is to ensure that industry models can be mapped to a deployable logical model for the collection of real-time emissions event data.
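One way to picture this mapping is a small registry of adapter functions, one per supplier format, each producing the same logical record. The feed names (`meter_v1`, `vehicle_v1`), the input field names and the output shape below are all hypothetical; they stand in for whatever industry models are actually in use.

```python
KM_PER_MILE = 1.609344  # exact definition of the international mile


def from_meter_feed(row):
    """Map a hypothetical smart-meter row (watt-hours) to the common shape."""
    return {
        "source_id": row["meter"],
        "sector": "energy",
        "quantity": row["wh"] / 1000.0,  # convert Wh to kWh
        "unit": "kWh",
        "timestamp": row["ts"],
    }


def from_vehicle_feed(row):
    """Map a hypothetical vehicle tracker row (miles) to the common shape."""
    return {
        "source_id": row["vin"],
        "sector": "transport",
        "quantity": row["miles"] * KM_PER_MILE,  # convert miles to km
        "unit": "km",
        "timestamp": row["time"],
    }


# Adding a new technology means registering one more adapter here.
MAPPERS = {"meter_v1": from_meter_feed, "vehicle_v1": from_vehicle_feed}


def to_common(feed_type, row):
    """Convert a supplier-specific row into the common logical record."""
    try:
        mapper = MAPPERS[feed_type]
    except KeyError:
        raise ValueError(f"no mapping registered for feed type {feed_type!r}") from None
    return mapper(row)
```

Everything downstream of `to_common` sees one record shape, which is the property that keeps integration cost independent of the collection technology.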

Once the sample size is large enough, the real-time data collections could be used to extrapolate carbon emissions in climate change models.
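A very simple form of such extrapolation is to scale the sample mean up to the whole population. The sketch below assumes the monitored sites are a simple random sample, which real deployments may not satisfy; the function name `extrapolate_total` is an assumption for this example, and a climate model would use far richer methods.

```python
from math import sqrt
from statistics import mean, stdev


def extrapolate_total(sample_kg, population_size):
    """Estimate total emissions and a standard error from a sample.

    sample_kg: emissions measured at each monitored site, in kg CO2
    population_size: number of sites in the whole population
    """
    n = len(sample_kg)
    if n < 2:
        raise ValueError("need at least two sampled sites")
    if population_size < n:
        raise ValueError("population cannot be smaller than the sample")
    estimate = population_size * mean(sample_kg)
    # Standard error of the scaled mean; no finite-population correction.
    std_error = population_size * stdev(sample_kg) / sqrt(n)
    return estimate, std_error
```

The standard error shrinks with the square root of the sample size, which is one concrete sense in which a larger sample makes the extrapolation more trustworthy.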

2 Opportunity to Real-Time Monitor CO2 Emissions

As people realize the importance of monitoring the world's resources, many new initiatives from governments, corporations and the community are emerging, with carbon emission estimates of varying quality or none at all.

There is a pressing requirement to provide accurate measurements and calculations, which would be facilitated by a systematic approach to information delivery.

Around the world, provision and planning has to be made for basic telemetry metrics, covering personal and industrial water utilization, energy usage by source, transport, agriculture production, and other carbon emissions.

2.1 Technology Already Exists

A collaborative effort by national and international stakeholders could provide a universal capability to aggregate and present accurate carbon emission statistics in near real time, using existing technology components.

The knowledge of how to build a telemetry integration platform out of existing technology could be shared with industry stakeholders.

Information management is currently a critical problem in large organizations. Information has to be accessed and shared across organization and technology boundaries.

Information sharing is critical for the monitoring and management of environmental change.

Carbon emissions monitoring is something that will probably become mandatory through international treaties and agreements within the next couple of years.

Current broadband speeds provide a unique opportunity to facilitate a methodical approach to carbon emissions reduction monitoring, by real-time aggregation of data.

With proper forward planning and technology models, it is possible to model and organize the analysis and aggregation of telemetry data, from many different sources, to begin to understand the real carbon cost of resource utilization, including electricity, water, transport, agriculture and manufacture, to name the major sources of carbon emissions.

2.2 Federate Information

A federated technology approach, that is, one using existing technology components from stakeholder organizations, integrated by a central federating capability, could provide a relatively low-cost carbon emissions data analysis and statistics capability.

Access to just-in-time data will become essential to facilitate international climate change targets and measures to reduce carbon emissions.

A well-planned catalogue of information access requirements, mapped to a central logical carbon emissions model, will provide the basis for information integration technology for local, regional and national governments. This technology can be collaborative and shared across organization boundaries based on user profiles.

2.3 Central Logical Model

A central logical model is essential to ensure that the common problems of current technology integration deployments are bypassed (such as large cost overruns and failures due to poor architecture, and systematic unresponsiveness to changing requirements). A central model allows for efficient interfaces between unlike systems, and facilitates technology services across organization boundaries.

Forward resource planning, and appropriate business and technical models have to be identified and determined in advance, for an effective solution to sharing information.

A best practices approach would work to ensure that information access services would be governed by measurable Service Level Agreements, and that these metrics would be subject to governance, tracked to supplier delivery.

2.4 Service Level Agreements

Examples of business services to which SLAs apply could be:

- Provide telemetry data transmissions over a network every 60 seconds

- Provide web portal access to energy usage and associated carbon emissions data

- Aggregate emissions reduction data daily by local area, by region and by country
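The first service above lends itself to an automated check. The following is a minimal sketch of how a 60-second transmission SLA might be tested against received timestamps; the function name `sla_breaches` and the exact breach rule (any gap strictly longer than the limit) are assumptions for illustration.

```python
from datetime import datetime, timedelta


def sla_breaches(timestamps, max_gap=timedelta(seconds=60)):
    """Return (previous, current) pairs whose gap exceeds max_gap."""
    ordered = sorted(timestamps)
    return [
        (earlier, later)
        for earlier, later in zip(ordered, ordered[1:])
        if later - earlier > max_gap
    ]


# Example: three transmissions, the last one arriving 90 seconds late.
start = datetime(2010, 1, 23, 12, 0, 0)
received = [start, start + timedelta(seconds=60), start + timedelta(seconds=150)]
breaches = sla_breaches(received)
```

A count of such breaches per supplier per day is the kind of measurable metric that could be tracked against supplier delivery.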

The federation and knowledge management capability of telemetry integration could be provided as a collaborative project, to optimize delivery efficiency and cost.

Power and transport monitoring components, for example, could be supplied internally or outsourced, based on service level agreements, and shared across industry sectors.

A model-based approach, if implemented correctly, is flexible. For example, when a new energy supplier is added to an energy grid, telemetry data could be automatically updated to reflect the new supply source, and the associated requirements for increased processing capacity and data storage could be automatically flagged to the network and infrastructure technology services.

This holds only if the model is the basis for the technology design and deployment, as retrofitting models is much less efficient and much more expensive.
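The new-supplier example can be sketched as a small registry that re-checks capacity whenever the model changes. The class `GridModel`, its method names and the capacity measure (readings per minute) are hypothetical, chosen only to show the idea of the model driving the infrastructure flag.

```python
class GridModel:
    """Toy registry of energy suppliers feeding one telemetry platform."""

    def __init__(self, capacity_readings_per_min):
        self.capacity_readings_per_min = capacity_readings_per_min
        self.suppliers = {}  # supplier name -> (energy source, readings per minute)

    def load(self):
        """Total readings per minute expected from all registered suppliers."""
        return sum(rate for _source, rate in self.suppliers.values())

    def add_supplier(self, name, energy_source, readings_per_min):
        """Register a supplier; return a capacity warning string, or None."""
        self.suppliers[name] = (energy_source, readings_per_min)
        if self.load() > self.capacity_readings_per_min:
            return (
                f"capacity exceeded: {self.load()} > "
                f"{self.capacity_readings_per_min} readings/min"
            )
        return None
```

Because the supplier list and the capacity check live in the same model, adding a supplier cannot bypass the check, which is harder to guarantee when a model is retrofitted.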

3 Recommendation with Risk Evaluation

The risks for the climate stability of the planet are huge. Effective governance of large carbon emitters, and cap and trade schemes for carbon pollution will be compromised by carbon leakage in the short to medium term.

It is clearly never too early to initiate a technology platform to improve the accuracy of carbon emission reduction monitoring.

An incremental distributed technology approach is a cost effective way to deliver information that will become increasingly accurate because of a collaborative knowledge base, eventually able to be shared internationally.

Real-time telemetry information can be aggregated with carbon calculation algorithms to provide more accurate information than existing methodologies.
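A minimal sketch of such a calculation multiplies metered energy by an emission factor for each source. The factor values below are placeholders chosen to make the arithmetic easy to follow and are not authoritative; the names `EXAMPLE_FACTORS_KG_PER_KWH` and `co2_from_energy` are assumptions for this example.

```python
# Placeholder emission factors in kg CO2 per kWh; NOT authoritative values.
EXAMPLE_FACTORS_KG_PER_KWH = {"coal": 1.0, "gas": 0.5, "wind": 0.0}


def co2_from_energy(kwh_by_source, factors):
    """Return kg CO2 for metered energy, given a factor per energy source."""
    missing = set(kwh_by_source) - set(factors)
    if missing:
        # Fail loudly instead of silently treating an unknown source as zero.
        raise KeyError(f"no emission factor for: {sorted(missing)}")
    return sum(kwh * factors[source] for source, kwh in kwh_by_source.items())
```

For instance, 10 kWh of coal, 20 kWh of gas and 30 kWh of wind under these placeholder factors gives 10 + 10 + 0 = 20 kg CO2. Measured consumption replaces the estimated activity data; only the factors remain a methodology choice.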

Involving the whole community in carbon emissions reduction monitoring is a smart move that will help large corporate carbon emitters to self-regulate, and will reduce carbon leakage through the pressure of public opinion.

Collaborative industry efforts to monitor the sources of carbon emissions in real time are required to limit global temperature rises.

The technology approach, if best practice, will enable knowledge sharing and co-operative technology development.

The fact that existing technology can be used as components of information integration is clearly cost-effective.

4 Use of Sparx Enterprise Architect for Technology Models

The most important criteria for building core logical models for carbon emissions monitoring are:

- Ability to use UML, the most widely used modelling language

- Ability to model from concept to code, i.e.

a) Business vision, concepts and requirements

b) Business processes, workflow and role definition

c) Technology service identification

d) Technology implementation and deployment

- Ability to auto-generate

a) Deployable logical metadata from information architecture

b) Multiple language source code, e.g. Java, C#

c) Multiple class transformations e.g. XML, WSDL.

These capabilities are key to providing information across organization boundaries, because they provide a common communications framework which can be versioned and change managed for collaborative projects, enabling synchronous deployment across multiple stakeholders on shared infrastructure.

To cover the scope of highly complex technology modeling, domain models provide a catalogue for technology deployment patterns.

A useful categorization is to distinguish common technology infrastructure from specialist applications.

- Technology Application Domains

- Technology Platform Domains

Doing so provides a starting point for the reuse of technology patterns, and also provides a coherent theme for technology integration.

Figures 2 and 3 illustrate examples of these domains.

Sparx Enterprise Architect is a technology of choice for collaboration on Model Driven Architecture (http://www.omg.org/mda/ ) amongst business and technology stakeholders from multiple organizations. It is readily available, easy to deploy, cost-effective and, compared with other products, easy to use.

The example figures used in this paper are extracts from Telemetry Services UML Model (http://www.trac-car.com.au/Telemetry Services Model Version 4.0 HTML/index.htm)

Figure 2: Example Application Domain Model

Figure 3: Example Technology Platform Domain Model

5 Implementation Overview and Accountabilities

The implementation of a flexible, change-enabled telemetry integration capability is undertaken as a planning process based on a central set of models, themselves subject to change management and governance.

A programme plan could be developed as a result of a collaborative feasibility study with the organization accepting responsibility for co-ordination of stakeholder effort. This process would be expected to take 6-12 weeks, depending on scale and complexity of the technology capability involved, and the level of commitment available from stakeholders.

An initial proof-of-concept could be deployed, building selected services from the model to an agreed plan. Subsequent deployment would be incremental, as resources become available.

A business and technology governance core group would be required to ensure that adherence to agreed standards and processes is achieved.

A good place to start might be a proof-of-concept based on telemetry metrics of power transmission by energy source by power supplier.

This would involve definition of Service Level Agreements for the required service performance and other metrics, and selection and evaluation of suppliers by those criteria.

Full technology deployment could be achieved with the advice of appropriate business and technology subject matter experts.

Accountabilities are largely predicated on having a strong and viable governance mechanism, administered by a core steering group, facilitated by specialist knowledge, and automated by event processing, data integration and web federation technologies.

The following table outlines the basic steps and accountabilities required to deploy a carbon emissions monitoring integration capability.

Human and financial resources could be distributed across participant organizations.

| Activity | Accountabilities | Time Estimate (days) |
| --- | --- | --- |
| Feasibility Study | Telemetry Integration Model implementers; Organisation stakeholders; Technology suppliers | 60 |
| Strategic Programme Plan | Organisation stakeholders; Programme planners | 30 |
| Pilot Telemetry Integration Platform | Internal and external technology integration suppliers; Organisation stakeholders; Programme planners | 120 |
| Full deployment of Telemetry Integration Capability | Internal and external technology integration suppliers; Organisation stakeholders; Programme planners | Ongoing |

6 Technology Deployment

The best practice approach to building information integration with current technology can be categorized as distributed incremental services, connected by a central federation capability for integration of data, using existing technology.

By implementing data integration and information access against a centralized model, with SLAs and governance for technology suppliers, a reliable set of technology services could deliver data just-in-time for rating, billing, and calculation of discounts for reduced carbon emissions.

By sharing technology knowledge and practice, infrastructure clouds and grids could be provisioned to provide the processing power to deliver information in real-time.

6.1 Timescales

This approach could be implemented by an incremental delivery of services. A meaningful output from a proof-of-concept can be delivered within 3 months. A core information integration and federation capability could be delivered within a further 6 months. After one year, distributed information access services would be available to aggregate telemetry information from energy suppliers and to provide a catalogue of carbon emissions model algorithms by industry. Subsequent service delivery would be incremental and relatively resource efficient.

By engaging in a collaborative information access process, essential feedback could be delivered across stakeholder communities, with group notifications based on user profile, promoting knowledge sharing for timely problem solving.

Costs would be contained by stakeholders supplying components from already existing technology.

6.2 Solution Architecture Assessment

This solution is an emerging incremental approach, designed to address the shortcomings of earlier technology deployments. It is also comparatively low cost, as costs could be shared amongst stakeholder organizations.

It allows for best practice technology components, built by many suppliers, linked together to make a cohesive whole, based on specific technology services subject to binding supplier service level contracts.

The core of this technology is the federation capability, which brings together the distributed components.

The basic premise is to utilize standard technology approaches, while planning and building incrementally within a methodology that seeks to optimize available technology components, linked by standard interfaces based on coherent information models.

The technology services can be knowledge managed and tracked to business requirements by way of Service Level Agreements based on metrics formed from practical experience.

6.3 Information Management with a Model

Information management of services, interfaces and performance is effected by central models, which also provide knowledge management of collaborative development, tracked through workflows accessed by users with appropriate access privileges.

Collaborative program management and good communication work well with this approach.

These attributes are facilitated by a model-based approach to implementation. A model-based approach also enables and facilitates technology change, and interface mappings to a logical model can take advantage of technology that already works well.

Technology service suppliers are more readily able to be selected on the basis of the technology evaluation criteria documented as Service Level Agreements, which reflect performance requirements and other metrics for the type of business service involved.

Secure web access to a knowledge base can be automated by business and technical user profiles mapped to the central model.

Business requirements can be mapped to technology services, to provide traceability and accountability, and readily knowledge managed by model catalogue category.